Cobra Agent

ML Engineering without a Human in the Loop

Dalpha Agent Team

Dalpha is a startup that provides an Agentic OS for commerce brands. Since its founding in 2023, our team has been developing an internal product called Cobra, a system designed to automatically generate and optimize custom AI models tailored to each client's unique data and business logic. Through this journey, we have accumulated deep expertise in developing agents that automate the machine learning engineering workflow end-to-end.

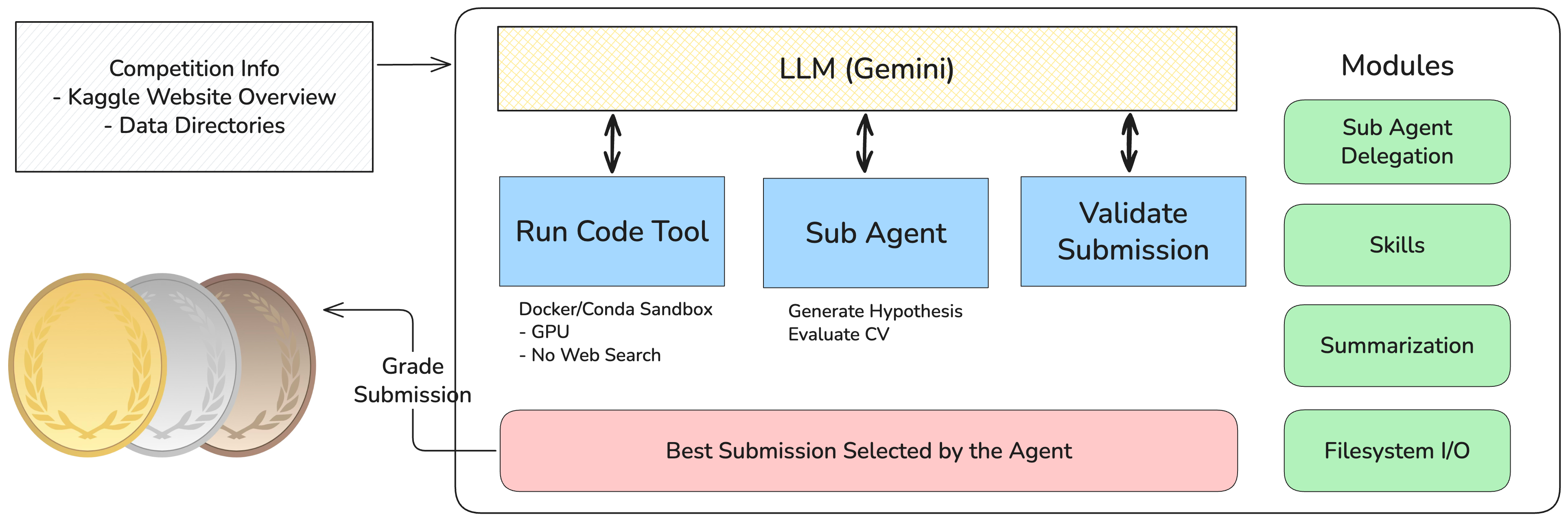

Cobra Agent is the culmination of that research. It is a fully autonomous machine learning engineering agent that takes a raw dataset and a problem description, then independently explores, builds, evaluates, and refines machine learning pipelines without any human intervention. Built on a stack of composable modules spanning sandboxed code execution and autonomous sub-agent orchestration, Cobra Agent operates within a persistent iterative loop, continuously improving its models until it achieves strong results or exhausts the time budget.

Cobra Agent is given a competition description and dataset, then autonomously writes, executes, and evaluates code in a sandboxed environment. The agent assesses candidate pipelines using internal validation signals such as holdout splits and cross-validation where appropriate, and selects submissions without relying on leaderboard or hidden test-set feedback.
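The post does not say how Cobra Agent builds its validation splits. As a rough illustration of the two internal signals it names, here is a stdlib-only sketch of shuffled k-fold and single-holdout index generation. `kfold_indices` and `holdout_indices` are hypothetical helpers, not part of Cobra Agent.

```python
import random
from typing import Iterator


def kfold_indices(n: int, k: int, seed: int = 0) -> Iterator[tuple[list[int], list[int]]]:
    """Yield (train_idx, valid_idx) pairs for shuffled k-fold cross-validation.

    Every sample lands in exactly one validation fold, so the k validation
    scores together cover the whole labeled set without touching test data.
    """
    if not 2 <= k <= n:
        raise ValueError("need 2 <= k <= n")
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    # Spread the remainder over the first n % k folds so sizes differ by at most 1.
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        valid = idx[start:start + size]
        train = idx[:start] + idx[start + size:]
        yield train, valid
        start += size


def holdout_indices(n: int, valid_frac: float = 0.2, seed: int = 0) -> tuple[list[int], list[int]]:
    """Single shuffled train/validation split, for when k-fold is too expensive."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_valid = max(1, int(n * valid_frac))
    return idx[n_valid:], idx[:n_valid]
```

K-fold costs k training runs but gives a lower-variance estimate; a single holdout is cheaper, which matters under a fixed time budget.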

All code runs in isolated Docker or Conda environments with strict filesystem partitioning: `/data/` is read-only and `/workspace/` is read-write. No web search is performed during execution, although pretrained model weights may be downloaded at runtime.
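A minimal sketch of the access policy implied by this partitioning, assuming it is checked per path. The `MOUNTS` table and the `is_allowed` function are hypothetical; the post only states which directories are read-only and which are read-write.

```python
from pathlib import PurePosixPath

# Hypothetical mount policy: /data is read-only, /workspace is read-write,
# and any path outside these two roots is denied.
MOUNTS = {
    PurePosixPath("/data"): "ro",
    PurePosixPath("/workspace"): "rw",
}


def is_allowed(path: str, write: bool) -> bool:
    """Return True if the sandbox policy permits this access."""
    p = PurePosixPath(path)
    if not p.is_absolute() or ".." in p.parts:
        # Reject relative paths and traversal so "/workspace/../data/x"
        # cannot slip a write past the read-only mount.
        return False
    for root, mode in MOUNTS.items():
        if p == root or root in p.parents:
            return mode == "rw" or not write
    return False
```

In a real Docker setup the same policy would more likely be enforced at the mount level, for example with a read-only bind mount (`-v <host_dir>:/data:ro`), rather than by an in-process check.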

Cobra Agent dynamically spawns specialized sub-agents to parallelize tasks such as EDA, feature engineering, and model training, similar to how tools like Claude Code delegate subtasks internally.
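A minimal fan-out/fan-in sketch of parallel sub-agents, assuming the subtasks are independent and a thread pool is acceptable (reasonable when each sub-agent mostly waits on LLM API calls). `SubTask`, `run_subagent`, and `orchestrate` are hypothetical names, and `run_subagent` is a placeholder rather than a real agent loop.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class SubTask:
    role: str          # e.g. "eda", "features", "train"; assumed unique per batch
    instructions: str


def run_subagent(task: SubTask) -> str:
    """Hypothetical stand-in: a real sub-agent would run its own LLM loop
    with a fresh context and return a short summary to the parent."""
    return f"[{task.role}] done: {task.instructions}"


def orchestrate(tasks: list[SubTask], max_workers: int = 4) -> dict[str, str]:
    """Run independent subtasks in parallel and collect their summaries by role."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = pool.map(run_subagent, tasks)  # map preserves input order
        return {t.role: r for t, r in zip(tasks, results)}
```

Returning only short summaries to the parent is one way to keep the orchestrator's context small; this is a design assumption, not something the post specifies.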

Cobra Agent continuously refines its models through a hypothesize-implement-evaluate cycle. It carefully manages labeled training and validation data, compares candidates using internal validation signals, and selects the best-performing candidate for final submission.
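The cycle can be sketched under a few assumptions. `propose` (hypothesize) and `evaluate` (implement, then score on internal validation data) are hypothetical callables supplied by the caller. Higher scores are better, and the budget is measured in wall-clock seconds. This illustrates the selection logic only, not Cobra Agent's actual implementation.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class Candidate:
    name: str
    val_score: float  # internal validation score; higher is better


def refine(
    propose: Callable[[Optional[Candidate]], Iterable[str]],
    evaluate: Callable[[str], float],
    budget_s: float,
    max_rounds: int = 100,
) -> Optional[Candidate]:
    """Hypothesize -> implement -> evaluate until the time budget runs out.

    Selection uses only the score returned by `evaluate`; no leaderboard or
    test-set feedback enters the loop.
    """
    deadline = time.monotonic() + budget_s
    best: Optional[Candidate] = None
    for _ in range(max_rounds):
        if time.monotonic() >= deadline:
            break
        # Hypothesize: propose new pipeline variants, conditioned on the current best.
        for name in propose(best):
            if time.monotonic() >= deadline:
                break
            # Implement + evaluate: build the variant and score it on held-out data.
            score = evaluate(name)
            if best is None or score > best.val_score:
                best = Candidate(name, score)
    return best
```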

The architecture is modular, with pluggable components for context summarization, prompt caching, file I/O, task management, and sub-agent orchestration. Each component is independently replaceable and extensible.
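One way such pluggable components might look, assuming Python `typing.Protocol` interfaces. The agent depends only on the interface, so an implementation can be swapped without touching the agent. `ContextSummarizer`, `KeepRecent`, and `Agent` are hypothetical. `KeepRecent` simply truncates old messages and stands in for a real LLM-based summarizer.

```python
from typing import Protocol


class ContextSummarizer(Protocol):
    def summarize(self, messages: list[str], max_chars: int) -> list[str]: ...


class KeepRecent:
    """Truncation strategy: keep the newest messages that fit in the budget."""

    def summarize(self, messages: list[str], max_chars: int) -> list[str]:
        kept: list[str] = []
        total = 0
        for msg in reversed(messages):
            if total + len(msg) > max_chars:
                break
            kept.append(msg)
            total += len(msg)
        return list(reversed(kept))


class Agent:
    """Depends only on the ContextSummarizer interface, not a concrete class."""

    def __init__(self, summarizer: ContextSummarizer, max_chars: int = 1000):
        self.summarizer = summarizer
        self.max_chars = max_chars
        self.history: list[str] = []

    def observe(self, message: str) -> None:
        self.history.append(message)
        self.history = self.summarizer.summarize(self.history, self.max_chars)
```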

Custom tools (`run_code_async`, `wait`, `check_log`, and `stop_process`) enable efficient parallel workloads. The agent launches long-running tasks (e.g., model training) in the background, monitors logs incrementally, and stops or restarts processes based on real-time metrics, maximizing GPU utilization and overall throughput.
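The tool names come from the text, but the post does not document their signatures or behavior. The following sketch is one plausible implementation using Python's `subprocess` module. It assumes each job is a Python snippet whose combined stdout/stderr goes to a log file, and `Job` and all four signatures are hypothetical. `check_log` keeps a byte offset so that each call returns only new output, which keeps incremental monitoring cheap.

```python
import subprocess
import sys
import tempfile
from dataclasses import dataclass
from typing import Optional


@dataclass
class Job:
    proc: subprocess.Popen
    log_path: str
    offset: int = 0  # bytes of the log already returned by check_log


def run_code_async(code: str) -> Job:
    """Launch a Python snippet in the background, streaming output to a log file."""
    log = tempfile.NamedTemporaryFile("wb", suffix=".log", delete=False)
    proc = subprocess.Popen(
        [sys.executable, "-u", "-c", code],  # -u: unbuffered, so logs appear promptly
        stdout=log, stderr=subprocess.STDOUT,
    )
    log.close()  # the child keeps its own inherited handle
    return Job(proc, log.name)


def check_log(job: Job) -> str:
    """Return only the log output produced since the previous call."""
    with open(job.log_path, "rb") as f:
        f.seek(job.offset)
        chunk = f.read()
    job.offset += len(chunk)
    return chunk.decode(errors="replace")


def wait(job: Job, timeout: Optional[float] = None) -> Optional[int]:
    """Block until the job exits or the timeout passes; return exit code or None."""
    try:
        return job.proc.wait(timeout=timeout)
    except subprocess.TimeoutExpired:
        return None


def stop_process(job: Job) -> int:
    """Terminate a job, e.g. a training run whose validation loss has diverged."""
    if job.proc.poll() is None:
        job.proc.terminate()
    return job.proc.wait()
```

With this split, the agent can poll several jobs with short `wait` timeouts, read each one's new log lines, and stop only the runs that look unpromising while the rest keep the GPU busy.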

We evaluate Cobra Agent on MLE-Bench, a benchmark suite by OpenAI consisting of 75 real-world Kaggle competitions spanning tabular data, computer vision, NLP, and more.

Representative agent-generated code from our runs is available in our artifact repository.

| Agent | LLM(s) | Low (%) | Medium (%) | High (%) | All (%) | Time (h) | Date |
|---|---|---|---|---|---|---|---|
| Cobra Agent (Ours) | Gemini 3.1 Pro Preview, Gemini 3 Flash Preview | 86.36 | 81.58 | 62.22 | 79.11 | 24 | 2026-03-17 |

All results use the MLE-Bench standard evaluation protocol.
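The table does not state how the All column is aggregated. The following arithmetic check assumes All is the competition-weighted mean over MLE-Bench's Low (22), Medium (38), and High (15) complexity splits, and it reproduces the reported 79.11. It verifies the arithmetic only and says nothing about how the per-split numbers were obtained.

```python
# Number of competitions in each MLE-Bench complexity split (22 + 38 + 15 = 75).
SPLIT_SIZES = {"low": 22, "medium": 38, "high": 15}

# Per-split percentages from the results table.
RESULTS = {"low": 86.36, "medium": 81.58, "high": 62.22}


def overall(results: dict[str, float], sizes: dict[str, int]) -> float:
    """Competition-weighted mean of the per-split percentages."""
    total = sum(sizes.values())
    return sum(results[k] * sizes[k] for k in sizes) / total


print(f"{overall(RESULTS, SPLIT_SIZES):.2f}")  # 79.11
```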

| Setting | Value |
|---|---|
| Model | Gemini 3.1 Pro Preview, Gemini 3 Flash Preview |
| GPU (Config A) | A100 SXM ×1 (80 GB VRAM), 11 vCPUs, 128 GB RAM |
| GPU (Config B) | RTX 4060 (16 GB VRAM), 16 vCPUs, 32 GB RAM |
| Time Limit | 24 hours per competition |